Methods Acting

Burkard Polster and Marty Ross

The Age, 3 February 2014

Welcome back! Your Maths Masters are here, refreshed and ready to bring you another year of pretty, playful mathematics. Along with, of course, our trademark grumpiness.

Maybe it's bad form to start off the year in a grumpy manner, but perhaps playfulness is also inappropriate. Thousands of Victorian students are embarking upon the very serious business of VCE mathematics. We empathise with them, and we're very sorry that they must navigate so much silliness.

The sad truth is that Specialist Mathematics is not all that special. (Those who disagree are invited to compare the Specialist exams with New South Wales' Extension 2 HSC mathematics exam.) However, our much larger gripe is with Mathematical Methods, a subject sorely lacking in method, or meaning.

We've battled with Methods before. Indeed, your Maths Masters take credit for the seeming extinction of a particularly inane type of exam question. Alas, there is so much inanity and so little time. Over the summer we took a careful look at the 2013 Methods exams; now, we're grumpy.

It is impossible to cover here all that is wrong with the exams. In brief, the first exam and the first half of the second exam (for which a super-duper calculator is permitted) consist of formulaic, simple-step questions, a number of them contrived to the level of mathematical meaninglessness. The questions offer almost no challenge to a good student, and consequently this testing is akin to an Olympic shooting event, where a nervous misfire or two can mean the difference between a gold medal and an also-ran score.

The second half of the second exam consists of more involved, multi-part questions. Unfortunately, most of these questions are appalling, almost transcendent in their pure, artificial ugliness.

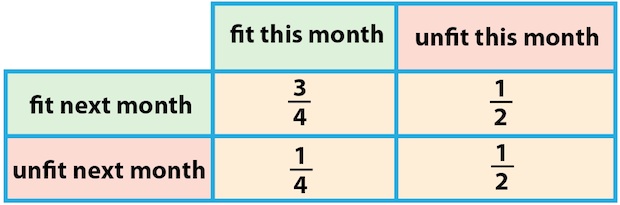

Let's consider one question in some detail, the problem of the FullyFit company. Once per month, FullyFit sets each of their clients some physical exercises, and if the client completes the exercises in under three minutes then they are declared "fit". (The actual wording on the exam is clumsy and needlessly jargonistic.) After two (rather contrived) questions on the probability of clients being fit, we have a question concerning Paula: we are told (along with a string of irrelevancies) that if Paula is fit one month then she has a 3/4 chance of being fit the next month; on the other hand, if Paula is unfit then she has only a 1/2 chance of being fit the following month. So far so good (enough), and this information can be summarised in the following table:

| Paula this month | Fit next month | Unfit next month |
| --- | --- | --- |
| Fit | 3/4 | 1/4 |
| Unfit | 1/2 | 1/2 |

The actual exam problem posed about Paula is a little complicated, but the central idea is captured by the following, simpler question: if Paula is fit in January then what are the chances that she will be fit in March?

To answer this question, we also have to consider the chances of Paula being fit in February, and we are led to two possible sequences: Fit-Fit-Fit and Fit-Unfit-Fit. The chances of the first sequence occurring are 3/4 × 3/4 = 9/16, and the second sequence has a 1/4 × 1/2 = 1/8 chance. That totals to an 11/16 chance that Paula will be fit in March. Problem solved!
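
For readers who like to check such sums by machine, here is a minimal sketch of the same calculation in Python. It is only an illustration: the names `P_FIT_GIVEN_FIT`, `P_FIT_GIVEN_UNFIT` and `next_month` are our own inventions, not anything from the exam, and the code simply takes for granted that only the previous month matters.

```python
from fractions import Fraction

# Month-to-month chances quoted above (names are illustrative only).
P_FIT_GIVEN_FIT = Fraction(3, 4)    # fit this month -> fit next month
P_FIT_GIVEN_UNFIT = Fraction(1, 2)  # unfit this month -> fit next month


def next_month(p_fit):
    """Chance of being fit next month, given the chance p_fit of being fit now.

    Assumes that only the current month affects the next one.
    """
    return p_fit * P_FIT_GIVEN_FIT + (1 - p_fit) * P_FIT_GIVEN_UNFIT


january = Fraction(1)            # Paula is known to be fit in January
february = next_month(january)   # 3/4
march = next_month(february)     # 9/16 + 1/8

print(february, march)  # prints: 3/4 11/16
```

Exact fractions are used rather than decimals so that the result can be compared directly with the 11/16 obtained by hand.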

Maybe.

The trouble is not with the calculation. The trouble is that there is something intrinsically wrong with the question itself, and the same holds for the actual exam question. We are told how Paula's fitness in one month affects the chances of her being fit the next month. But what if we know, for example, that Paula was fit in both January and February? It would be natural to assume that the extra month of fitness increases Paula's chance of being fit in March to something above 3/4. However, the exam question just assumes, implicitly and implausibly, that this is not the case. (In Methods lingo, it is being assumed that Paula's fitness is modelled by a Markov chain.)

Now we'll agree that this is somewhat nitpicky; it is difficult to word exam questions so they are both precise and clear, and probably all students would have known what was expected. But the fact remains, the implicit month-to-month model of Paula's fitness is simply the wrong model. In any case, things get much worse.

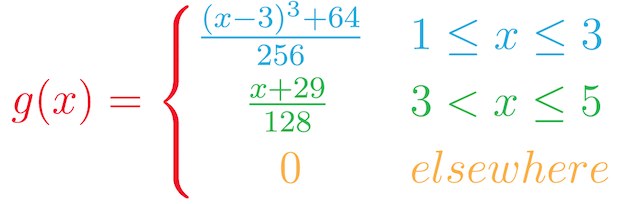

The FullyFit questions that follow are concerned with the amount of time it takes clients in general to complete their exercises, with the spread of times represented by the following probability density function:

If this is intimidating, please do not be concerned. Suffice to say, the from-God-knows-where function defined above has absolutely no connection to reality, and so nothing of interest or sense can follow.

Now, of course your Maths Masters might have just cherry-picked the silliest question on the exam, and of course we have not. We cannot examine every piece of nonsense in the same detail, but we can hint at a few highlights.

We can ponder, for example, why some fellow has decided to make his garden bed follow the graph of a trigonometric function. Or, where Tasmania Jones found his cubic-shaped mountain. (Tasmania Jones is a recurring character in Methods exams. Similar to Indiana Jones, his initial charm has long since dissipated and he is now simply an embarrassment.)

Most fascinating is Tasmania Jones's train, which apparently travels at a speed of k√x − mx² kilometres per hour, where x is the distance along the track (and where k and m are wrongly referred to as "positive real constants"). It's safe to say that Jones has found the first train in the history of the planet which travels in such a manner.

Why all this absurd modelling? After all, it is perfectly legitimate and much simpler to test mathematics free of any scenario. If the stories are there to lighten the mood, they fail dismally: trudging through laboured descriptions in a high stakes exam is just really annoying. And, if the intent is to test real modelling, the questions are a disaster: the message is that modelling is an arbitrary game, that any mathematical function can be applied in any setting. There's way too much of that around already.

Of course your Maths Masters are not the only ones who are annoyed. We are sure that the vast majority of Methods teachers are frustrated by having to teach in the shadow of such exams. And the Methods students, even if they are unaware of more subtle flaws, must suspect that their subject is largely a game.

As to what the VCE bosses are thinking, why they set such exam questions year after year, who knows? However, there is another, all-too-familiar culprit that is clearly responsible for much of this nonsense.

One of the purported positives of Maths Methods is its use of CAS ("computer algebra system") calculators. These super-duper calculators permit the student to handle all manner of difficult calculation, which means that more interesting and involved situations can be modelled mathematically. Well, that's the theory.

In fact, as we have seen, the modelling typically bears little or no relation to either the real world or good mathematical modelling. Indeed, it seems clear that the absurd models, as well as many of the shorter exam questions, are chosen solely to test the students' ability to use the calculator. It is obviously a ridiculous state of affairs, made all the more ridiculous by the fact that these expensive machines are just inferior computers and, once VCE is over, they will simply sit in the cupboard gathering dust. Moreover, this nonsense also comes at a huge extra cost. A number of beautiful and instructive topics in Methods have been reduced to tedious, meaningless button-pushing.

Now, arguably this is the wrong time to be criticising Maths Methods: the Australian Curriculum has appeared, and the VCE bosses are accordingly in the process of adapting the VCE. So, things will change. For the better? Maybe. But, we really, really, really doubt it. If you think your Maths Masters are grumpy now, just wait until 2015.

Burkard Polster teaches mathematics at Monash and is the university's resident mathemagician, mathematical juggler, origami expert, bubble-master, shoelace charmer, and Count von Count impersonator.

Marty Ross is a mathematical nomad. His hobby is helping Barbie smash calculators (especially CAS calculators) and iPads with a hammer.

Copyright 2004-∞ All rights reserved.
